Paleolithic Emotions, Medieval Institutions, and Godlike Technology

E.O. Wilson gave us the diagnosis. Most organizations are still ignoring it.

A few weeks ago, I finished reading The Origins of Creativity by the late Edward O. Wilson, the Harvard evolutionary biologist who spent a lifetime trying to understand what makes us human. I had been meaning to read it for years. I finally picked it up because of a single quote I kept encountering, a line Wilson had been repeating in lectures and interviews for more than a decade before it became suddenly, uncomfortably relevant.

He said it plainly:

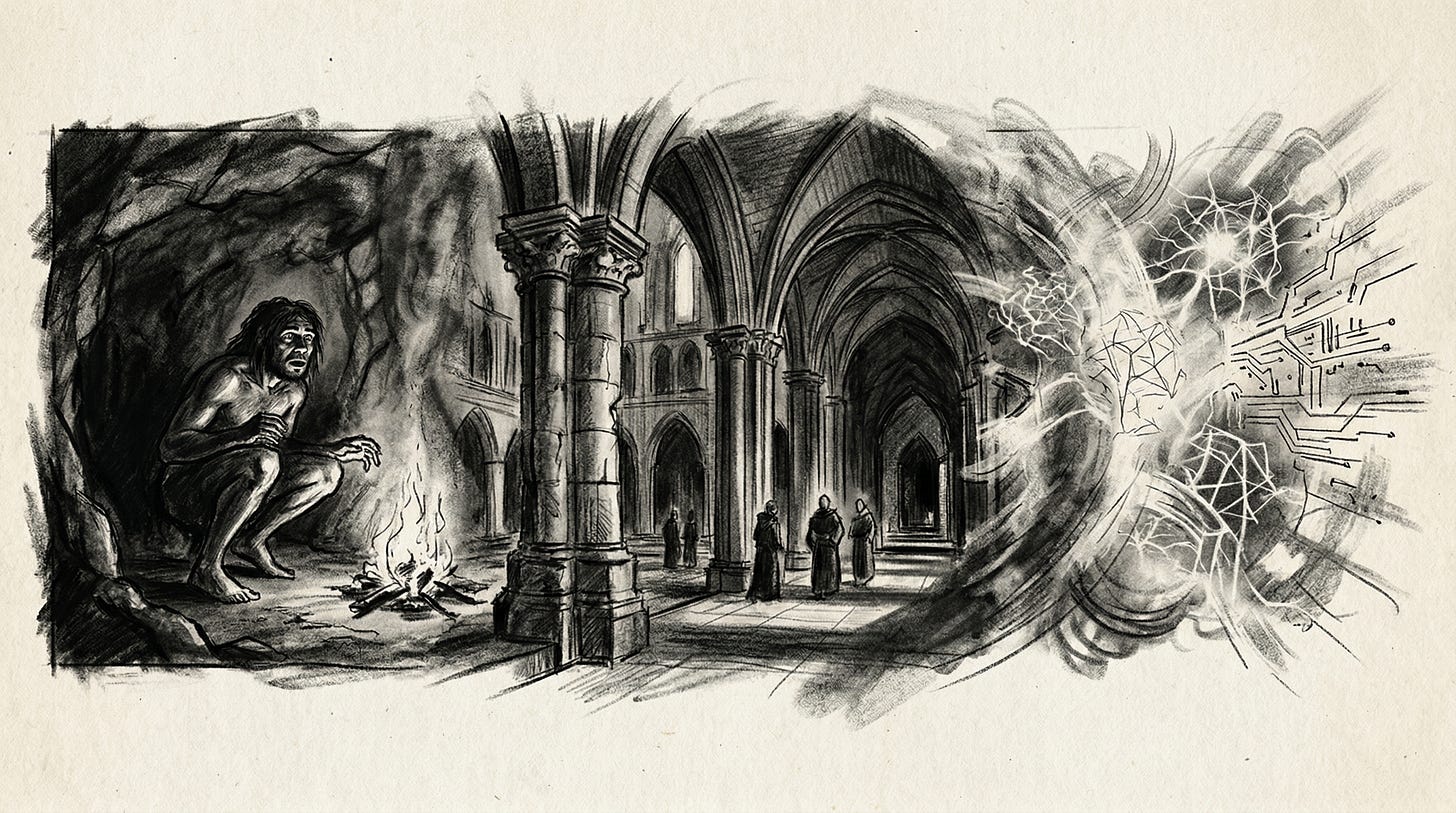

“We have Paleolithic emotions, medieval institutions, and godlike technology.”

Wilson wasn’t writing about artificial intelligence specifically. He was writing about the fundamental mismatch at the core of the human condition: that our emotional wiring, our organizational structures, and our technological capabilities operate on wildly different timescales. Our brains evolved over hundreds of thousands of years. Our institutions evolved over centuries. Our technology evolves over months. And right now, the gap between the third item and the first two is widening fast enough to demand a response from every leader who is paying attention.

The question isn’t whether AI is powerful. It obviously is. The question is whether the culture and institutions inside your organization are capable of wielding that power with wisdom. And when I look at the evidence, from running B:Side to teaching students at ASU, I don’t think most organizations have seriously grappled with what Wilson was telling us.

Three Clocks Running at Different Speeds

Wilson’s formulation is elegant because it names three separate problems that usually get treated as one.

The first is our Paleolithic wiring. Human cognition evolved for small groups of roughly 150 people, moving across the African savanna, responding to immediate physical threats, competing for status and resources in real time. Evolutionary psychologist Robin Dunbar identified 150 as the natural limit of stable human social relationships, the number at which our brains can track trust, reciprocity, and reputation. We are still, neurologically, tribal creatures. We anthropomorphize. We react to perceived threats faster than we reason about them. We discount the future in favor of the present. Daniel Kahneman spent a career documenting this: our System 1 thinking is fast, intuitive, and riddled with biases that made sense on the savanna but are actively dangerous in complex modern organizations. We trust what feels familiar. We fear what feels novel. We mistake busyness for progress and confidence for competence.

The second problem is our institutional architecture. Wilson called it “medieval” and he wasn’t being hyperbolic. The hierarchical structures that govern most organizations, rigid chains of command, annual planning cycles, siloed departments, risk-averse approval processes, were designed for a world that no longer exists. They were built to create predictability and control in slow-moving environments. They are not designed for speed, adaptation, or ethical complexity at scale. And here is what the data says: they have been getting worse, not better. The Edelman Trust Barometer, which has tracked institutional trust globally for two decades, describes the current period as a trust “avalanche.” Governments, media, universities, corporations: all declining. The institutions that were already insufficient for the pace of the twenty-first century have been further hollowed out by a decade of polarization, algorithmic noise, and compounding crises.

The third problem, the godlike technology, needs almost no introduction in 2025. Generative AI is not an incremental improvement. It is a capability discontinuity. Systems that can perform at or above expert human level across knowledge work, code, analysis, communication, and decision support did not exist in any meaningful form five years ago. They are now available to every organization on the planet, at commoditized cost. As Tristan Harris of the Center for Humane Technology has put it, we now have technology that can match or outperform us in domains we thought were exclusively human, running on infrastructure optimized to capture attention and accelerate adoption, crashing into the civilization we built before any of this was possible.

Three clocks running at three different speeds. That’s the problem.

We Have Seen This Before

This is not the first time in history that technology has dramatically outpaced the culture and institutions designed to manage it.

In the early decades of the Industrial Revolution, factories appeared almost overnight. Steam power transformed production on a timeline that made existing social structures look like they were standing still, because they were. Child labor, sixteen-hour workdays, industrial accidents that killed workers by the thousands: none of these were the inevitable outcomes of industrialization. They were the outcomes of industrialization running ahead of the institutions designed to govern it. The technology existed. The wisdom to deploy it responsibly did not catch up for decades. And in the lag between those two things, real human suffering accumulated.

The labor movement, factory reform legislation, public health standards, worker protections: these didn’t emerge spontaneously from the technology itself. They required deliberate, painful institutional construction. They required leaders, inside and outside of government, who were willing to acknowledge the gap between capability and wisdom and then do the unglamorous work of closing it.

What the Industrial Revolution teaches us is not that technology is dangerous. It is that the gap between capability and governance is where the damage happens. And the organizations that navigated that era well were the ones that built strong internal cultures and governance structures before they were forced to by law or catastrophe.

We are in that lag right now. The question is how long we let it run.

The Campfire Nobody Is Tending

Here is what I am watching in real time, and it is not abstract.

At B:Side, we move capital into small businesses. Every credit decision is a judgment call that sits at the intersection of data, human context, and institutional values. AI can process the data faster than any analyst on my team. It cannot replicate the judgment, and more importantly, it cannot replicate the accountability. When something goes wrong with a loan decision made by a machine, the question of who owns that outcome is not theoretical. It is immediate and it is consequential. The organizations I watch navigate this well have invested heavily in the culture that surrounds the technology: clear values, defined limits, decision rights that are explicit rather than assumed. The ones struggling are deploying capability into an institutional vacuum and hoping for the best.

At ASU, I watch students trying to figure out what skills will still command value in five years. The ones who seem best positioned are not the ones who have learned the most AI tools. They are the ones with the deepest foundation in judgment, communication, ethical reasoning, and interpersonal trust, the capabilities that the systems now automating technical tasks do not replace. That gap, between what the technology can do and what the human needs to provide, is the institutional design challenge of our era.

Wilson spent his career documenting how human creativity and culture emerged from what he called the campfire: the shared space where our ancestors told stories, built trust, adjudicated disputes, and transmitted values across generations. The campfire was the original institution. It was where the social contract was forged, not in legal documents, but in the lived practice of people who depended on each other being honest about what they saw. What Wilson was warning us about, more than a decade before the current AI wave, is that we have been building algorithms without tending the campfire.

The campfire is still there. Most leaders just aren’t spending enough time at it.

What Leaders Can Actually Do

The answer to Wilson’s diagnosis is not to slow down AI adoption. It is to accelerate institutional development at the same speed. Here is how that translates into practice:

First, treat culture as infrastructure, not decoration. The organizations navigating AI well, companies like Microsoft and Unilever, have made culture and governance investment as fundamental as technology investment. This means dedicated AI oversight functions, cross-functional ethics councils, and executive accountability for how AI is deployed, not just whether it performs. Culture is the operating system. AI is an application running on top of it. If the operating system is weak, the application running on it cannot be trusted.

Second, build the campfire deliberately. High-trust teams that deliberate together, that have developed shared norms for how to reason about hard decisions, are better equipped to exercise judgment when AI introduces complexity or ambiguity. This means investing in the quality of team dialogue, not just the volume of it. Psychological safety, the ability for people to raise concerns without fear, is not a soft HR concept. It is the mechanism by which institutions catch errors before they become catastrophes.

Third, design for long-term thinking in a short-term environment. Our Paleolithic wiring biases us toward immediate rewards and visible threats. AI deployment decisions often involve tradeoffs that are years out: reputational risk, regulatory exposure, talent dynamics, cultural erosion. Build the governance structures and incentive systems that counter that bias. Tie leadership compensation to long-term outcomes, not just quarterly metrics. Create deliberation processes that slow decisions down when the stakes are high, even when the technology moves fast.

Fourth, invest in AI literacy at every level, not just at the top. Institutional wisdom is distributed. The people closest to day-to-day operations are often the first to notice when something is going wrong. They need enough understanding of AI systems to recognize anomalies, surface concerns, and participate meaningfully in governance conversations. This is not about turning everyone into a machine learning engineer. It is about creating the shared vocabulary that allows an organization to reason collectively about powerful tools.

Fifth, name your bright lines before you need them. The organizations that maintain integrity under pressure are not the ones that reason from scratch when a crisis arrives. They are the ones that have already thought through the hard cases. What will you not do with AI, regardless of competitive pressure? Where does the line sit? Having that conversation now, writing it down, building it into your institutional DNA, is the campfire work of our moment.

The Upgrade Is on You

Wilson called it “terrifically dangerous, and now approaching a point of crisis overall.” He said that in 2009. He would be more emphatic now.

But here is what matters. Every one of Wilson’s three elements is, in principle, addressable from the inside out. We cannot rewire our Paleolithic brains overnight. But we can build institutions that compensate for our cognitive limitations. We can create cultures that make long-term thinking more likely, that make ethical reasoning more practiced, that make the deployment of powerful tools more deliberate and values-aligned.

The industrial age produced reformers who understood that technology without institutional wisdom is not progress. It is just acceleration. The people who shaped the better outcome were not the ones who slowed the technology down. They were the ones who built the governance structures capable of channeling it toward human ends.

That is the work in front of every leader right now. The campfire is still there. The question is whether you are tending it.

Honor Under Pressure is available now. The full series, along with ongoing resources and community for leaders navigating the Fourth Turning, lives at www.thefourthturningleader.com.